In Part 1 of this post, we discussed cracking Text Extraction with high accuracy, in all kinds of CV formats. In this post, we are going to talk about Information Extraction, which is another such problem that requires higher order intelligence to solve.

What is Information Extraction?

According to Wikipedia,

Information extraction (IE) is the task of automatically extracting structured information from unstructured and/or semi-structured machine-readable documents.

A typical resume can be considered as a collection of information about the Experience, Educational Background, Skills and Personal Details of a person. These details can be presented in various ways, or be absent altogether. In the next section, we talk about some of the challenges that make Information Extraction from a resume particularly hard.

The Challenges in Information Extraction

Keeping up with the vocabulary used in resumes is a big challenge. A resume consists of company names, institutions, degrees, etc., which can be written in several ways. For example, “Skillate” and “Skillate.com” both refer to the same company but will be treated as different words by a machine. Moreover, new company and institute names come up every day, and thus it is almost impossible to keep the software’s vocabulary updated.

Even if somehow we manage to maintain the vocabulary, it is impossible to account for different meanings of the same word. Consider the following two statements:

‘Currently working as a Data Scientist at Skillate’

‘Skillate is an HR Tech. company with a focus on Artificial Intelligence’

In the former statement, “Skillate” will be tagged as a company as the statement is about working there. But the latter does not tell us about the experience of a person, so “Skillate” should be considered as a normal word and not as a company. It is evident that the same word can have different meanings, based on its usage.
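Both challenges can be reproduced with a minimal sketch of a lookup-based tagger. This is only an illustration, not our parser: the names `KNOWN_COMPANIES` and `tag_companies` and the `COMPANY`/`O` tags are hypothetical.

```python
import re

# Hypothetical fixed vocabulary of company names (illustrative only).
KNOWN_COMPANIES = {"skillate"}


def tag_companies(sentence):
    """Tag a token as COMPANY only when it exactly matches the vocabulary."""
    # Keep dots inside tokens so "Skillate.com" stays a single token.
    tokens = re.findall(r"[\w.]+", sentence)
    return [
        (tok, "COMPANY" if tok.lower() in KNOWN_COMPANIES else "O")
        for tok in tokens
    ]


# Vocabulary problem: a spelling variant of a known company is missed.
variant = tag_companies("Currently working as a Data Scientist at Skillate.com")

# Context problem: both mentions get the same tag, whatever the sentence says.
experience = tag_companies("Currently working as a Data Scientist at Skillate")
description = tag_companies(
    "Skillate is an HR Tech. company with a focus on Artificial Intelligence"
)
```

Here “Skillate.com” is left untagged because it is not in the vocabulary, while “Skillate” is tagged as a company in both of the other sentences, including the one that says nothing about a person’s experience.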

Our Approach — Deep Information Extraction

The Concept of Deep Information Extraction

The above challenges make it clear that vocabulary-based statistical methods like Naive Bayes are bound to fail here, as they are severely handicapped by their vocabulary and cannot account for different meanings of the same word. So how do we crack this seemingly hard problem? We turn to Deep Learning to do all the hard work for us! We call this approach Deep Information Extraction.

A thorough analysis of the challenges posed makes it evident that the root of the problem here is understanding the context of a word.

Consider the following statement:

‘2000–2008: Professor at Universitatea de Stat din Moldova’

It is quite likely that you did not understand every word in the above statement. Even so, you can probably guess that since “Professor” is a job title, “Universitatea de Stat din Moldova” is most likely the name of an organization.

Now consider one more example, with two statements:

‘2000–2008: Professor at IIT Kanpur’

‘B.Tech in Computer Science from IIT Kanpur’

Here, IIT Kanpur should be treated as an Employer Organisation in the former statement and as an Educational Institution in the latter. We can differentiate between the two meanings of ‘IIT Kanpur’ here by observing the context. The first statement has ‘Professor’, which is a Job Title, indicating that IIT Kanpur should be treated as an Employer Organisation. The second one mentions a degree and a major, which point towards IIT Kanpur being tagged as an Educational Institution.

Applying Deep Learning to solve Information Extraction greatly helped us to effectively model the context of every word in a resume.

Methodology for Information Extraction

To be specific, Named Entity Recognition (NER) is the task we applied deep learning to for Information Extraction from resumes.

More Formally:

“NER is a subtask of information extraction that seeks to locate and classify named entity mentions in unstructured text into pre-defined categories such as the person names, organizations, locations, etc, based on context.”
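To make “locate and classify” concrete, here is a minimal sketch of how per-word labels can be grouped into entities. It assumes the common BIO tagging scheme (B- begins an entity, I- continues it, O is outside any entity); the scheme and the label names `TITLE`, `EMPLOYER`, `DEGREE`, `MAJOR` and `INSTITUTE` are assumptions made for illustration, not necessarily the label set of our model.

```python
def extract_entities(tokens, labels):
    """Group BIO-labelled tokens into (entity_text, entity_type) pairs."""
    entities = []
    current_tokens, current_type = [], None
    for token, label in zip(tokens, labels):
        if label.startswith("B-"):
            # A new entity starts; close the one in progress, if any.
            if current_tokens:
                entities.append((" ".join(current_tokens), current_type))
            current_tokens, current_type = [token], label[2:]
        elif label.startswith("I-") and current_type == label[2:]:
            # Continuation of the entity in progress.
            current_tokens.append(token)
        else:
            # "O" (or an I- tag with no matching open entity) ends the entity.
            if current_tokens:
                entities.append((" ".join(current_tokens), current_type))
            current_tokens, current_type = [], None
    if current_tokens:
        entities.append((" ".join(current_tokens), current_type))
    return entities


# The same phrase gets a different label depending on its context.
work = extract_entities(
    ["Professor", "at", "IIT", "Kanpur"],
    ["B-TITLE", "O", "B-EMPLOYER", "I-EMPLOYER"],
)
study = extract_entities(
    ["B.Tech", "in", "Computer", "Science", "from", "IIT", "Kanpur"],
    ["B-DEGREE", "O", "B-MAJOR", "I-MAJOR", "O", "B-INSTITUTE", "I-INSTITUTE"],
)
```

In this hand-labelled example, ‘IIT Kanpur’ comes out as an `EMPLOYER` in the first statement and as an `INSTITUTE` in the second. Producing such labels automatically from context is the job of the NER model.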

Through the examples mentioned above, it should be clear that NER is a very domain-specific problem, and thus we had to build our own deep learning model from scratch.

Steps to building a model for Information Extraction

To build our own model, the first step was to decide on the model architecture. We went through a lot of research papers and other literature on NLP and decided to use LSTMs (a type of recurrent neural network) in our model, as they take into account the context of a word in a statement. Once the architecture was agreed upon, we started curating a dataset for model training and evaluation. This step was the most cumbersome part of the process, and we suggest that anyone who wants to build their own deep learning model start thinking about it from a very early stage.

We tried several automated ways to generate the training data, but our model wasn’t performing well. We had our eureka moment when we finally sat down and went through the entire dataset! We realised that the training data we were generating with code was itself very ambiguous and had no coherence whatsoever.

We then prepared an unlabelled training dataset and searched online for tools that would help us coordinate the manual annotation effort within our team. We did a small PoC within the company, in which we labelled a relatively small dataset ourselves and trained the model on it. As expected, we started getting much better results out of the model. We tracked the model performance using the F1 score. This score, which had been hovering in the 20s, suddenly rocketed to 60 and above! We knew we were on the right track. We hired some people to do the data labelling and started the training process. By the end of this process, the model had crossed our benchmark of 80.0!
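For readers unfamiliar with the F1 score used to track the model, it is the harmonic mean of precision and recall. Below is a minimal sketch of an entity-level version, in which a predicted entity counts as correct only if both its text and its type match the annotation. Whether the score should be computed per entity or per token is a choice; this sketch assumes the entity-level variant, and the function name and sample labels are illustrative.

```python
def f1_score(predicted, gold):
    """Entity-level F1: an entity counts as correct only on an exact match."""
    predicted, gold = set(predicted), set(gold)
    true_positives = len(predicted & gold)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(predicted)  # correct / all predicted
    recall = true_positives / len(gold)          # correct / all annotated
    return 2 * precision * recall / (precision + recall)


# Hand-made example: the model gets the job title right but mislabels
# the organisation as an educational institution.
gold = {("IIT Kanpur", "EMPLOYER"), ("Professor", "TITLE")}
predicted = {("IIT Kanpur", "INSTITUTE"), ("Professor", "TITLE")}
score = f1_score(predicted, gold)
```

The function returns a value between 0 and 1; multiplying it by 100 gives the scale used for the scores quoted above.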

Data labelling for NER

The task of data labelling seems trivial and lowly, but it actually gave us an insight into the performance of our model that no research paper could have given.

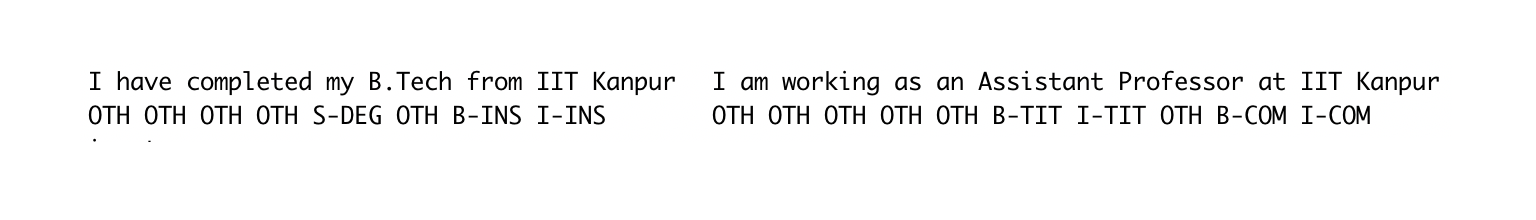

Below is a snippet from our NER model results. It shows how our model is able to recognize and differentiate the different meanings of the phrase ‘IIT Kanpur’ in different contexts. Each word has a corresponding label.

Our extensive work on Text Extraction (Part 1) and Information Extraction yielded great results, and we built a parser that is robust enough to challenge even the best resume parsers in the world. We currently parse resumes in English and are working towards adding multilingual capabilities to our parser.

What’s Next!

We at Skillate are constantly working towards making life easier for our users through smart products. Some of the products we are currently working on are a Chatbot to save the time and effort wasted in getting basic details of candidates, and a JD Assistant to help recruiters write better Job Descriptions.

Constant technological advancements have been happening in the field of AI and we are inclined towards leveraging these developments in our products. So stay tuned for our future posts!

Thank You